Evidence-First Delivery is here. AI-assisted development makes code cheap and change volume effectively unbounded. Human attention does not scale the same way. If your delivery process still assumes that a person will read most lines that ship, you will end up with one of two outcomes: a review queue that becomes your limiting reagent, or “rubber stamp review” that provides the feeling of safety without the reality.

The replacement is not “no review.” The replacement is a different object of review.

A scalable process treats every change as a claim and requires machine-checkable evidence proportional to the change’s risk. Humans review intent, risk, interfaces, and evidence, not raw diffs by default.

This article proposes an approach I’ll call Evidence-First Delivery (EFD): risk-gated automation, attestable build provenance, and progressive delivery as the primary safety system.

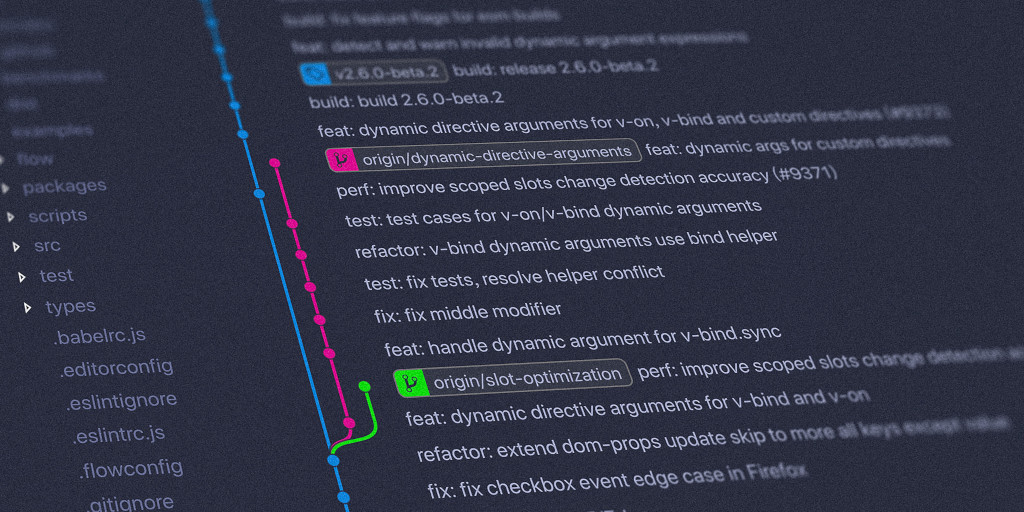

Why classic PR review fails at machine-speed coding

Line-by-line review was never a strong correctness proof. It was a pragmatic filter:

- It catches obvious mistakes and missing edge cases.

- It spreads context.

- It enforces local conventions.

But as a primary gate, it is mismatched to modern failure modes:

- Runtime behavior does not fit in a diff. Concurrency, partial failures, load, and configuration interactions are where incidents live. A diff review can’t “execute the system.”

- The signal-to-noise ratio collapses with volume. When changes become plentiful, reviewers skim. Skimming is not a strategy; it is a symptom.

- Security and supply chain risk are not diff-shaped. Dependency changes, build steps, provenance, and permissions often matter more than the code you touched.

This is why mature safety guidance for software development emphasizes repeatable practices, automation, and evidence collection, not “a human read it” as the core control. NIST’s Secure Software Development Framework (SSDF) is explicit about integrating secure practices throughout the SDLC and producing artifacts as records of those practices. (NIST Publications)

So if the old control is weak and expensive, the scalable move is to replace it with controls that are:

- cheaper than humans,

- harder to bypass,

- measurable, and

- proportional to risk.

Evidence-First Delivery in one sentence

Every change must ship with a signed “evidence bundle” whose required contents are determined by an automated risk score, and production rollouts must be progressive and reversible.

That is the core upgrade over the common “shift review earlier” idea. Specs and plans matter, but a spec without enforceable evidence is still an opinion.

Evidence-First Delivery: The Six Principles

1) Intent is an artifact, not a meeting

For every change that is not trivial, require a short, structured intent document in-repo. Think of it as change.md or plan.yaml, with:

- user-visible behavior and non-goals

- invariants (what must never happen)

- interfaces touched (APIs, schemas, permissions)

- rollback strategy

- observability additions (metrics, logs, traces)

- risk factors (data migration, auth, external deps)

This is not bureaucracy if it is used as an input to automation. It becomes the root of your evidence bundle.
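To make "input to automation" concrete, here is a minimal sketch of how CI might check an intent artifact after parsing it. The `IntentPlan` class, its field names, and the choice of which sections are mandatory are my assumptions for illustration, not a fixed schema.

```python
from dataclasses import dataclass, field


@dataclass
class IntentPlan:
    """Hypothetical in-memory form of a parsed plan.yaml intent artifact."""
    behavior: str                                            # user-visible behavior
    non_goals: list[str] = field(default_factory=list)
    invariants: list[str] = field(default_factory=list)      # what must never happen
    interfaces: list[str] = field(default_factory=list)      # APIs, schemas, permissions
    rollback: str = ""                                       # rollback strategy
    observability: list[str] = field(default_factory=list)   # metrics, logs, traces
    risk_factors: list[str] = field(default_factory=list)


# Assumption: these three sections are the minimum a non-trivial change must fill in.
REQUIRED_SECTIONS = ("behavior", "invariants", "rollback")


def missing_sections(plan: IntentPlan) -> list[str]:
    """Return the required sections that are empty, in declaration order."""
    return [name for name in REQUIRED_SECTIONS if not getattr(plan, name)]
```

A CI job could fail the build whenever `missing_sections` returns a non-empty list, which keeps the template honest without requiring a human to police it.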

NIST SSDF’s emphasis on defined outcomes and maintaining artifacts aligns with this “intent becomes an auditable input” stance. (NIST Publications)

2) Risk-score the change, then scale the required proof

Most teams gate merges uniformly: every PR needs the same ritual. That does not scale.

Instead, compute a risk score for each change. A simple v1 is a weighted sum of:

- subsystems touched (auth, billing, crypto, data plane)

- blast radius (public API, schema, config, infra)

- novelty (new dependency, new permission, new service)

- change size and churn (lines changed, files changed)

- historical defect density of touched areas

- rollout difficulty (can it be feature-flagged, can it be canaried)

Then map score → required controls:

- Low risk: auto-merge if evidence bundle is complete and green.

- Medium risk: require an “interface review” by an owner plus stronger automated evidence (contracts, perf check).

- High risk: require design review plus deeper adversarial verification (fuzzing, chaos test, migration rehearsal) and progressive rollout.

This turns human review into an exception path driven by risk, not a default tax.
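A v1 of the scoring and mapping described above can be very small. The sketch below assumes each signal has already been normalized to a value between 0 and 1; the weights, signal names, and tier thresholds are placeholders I made up, and they should be tuned against your own incident history.

```python
# Hypothetical weights; each signal is expected as a float in [0, 1].
WEIGHTS = {
    "sensitive_subsystem": 30,  # auth, billing, crypto, data plane
    "blast_radius": 25,         # public API, schema, config, infra
    "novelty": 20,              # new dependency, permission, or service
    "change_size": 10,          # lines and files changed
    "defect_density": 10,       # historical defects in touched areas
    "rollout_difficulty": 5,    # cannot be feature-flagged or canaried
}


def risk_score(signals: dict[str, float]) -> float:
    """Weighted sum of the signals; missing signals count as 0."""
    return sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())


def risk_tier(score: float) -> str:
    """Map a score to the tier that selects the required controls."""
    if score >= 50:
        return "high"
    if score >= 20:
        return "medium"
    return "low"
```

For example, a change that touches auth and adds a new dependency scores 50 and lands in the high tier, while a large refactor in a quiet area scores 10 and stays low.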

3) Replace “reviewing code” with “reviewing invariants”

One major motivation for Evidence-First Delivery is that humans are bad at exhaustive diff inspection but good at identifying:

- missing constraints

- dangerous interface changes

- ambiguous requirements

- incorrect threat models

So move the center of gravity to mechanically checkable invariants:

- API contracts (OpenAPI/Proto schemas, compatibility rules)

- database migration rules (forward-only, reversible where possible)

- authorization invariants (least privilege, explicit approvals)

- data integrity rules and idempotency rules

Contract testing is a practical way to make interface expectations executable. Pact is a widely used consumer-driven contract testing tool that generates contracts from consumer tests and verifies providers against them.

Property-based testing is another way to encode invariants. Hypothesis, for example, generates test cases from strategies to explore edge conditions beyond hand-written examples.
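To show what "encoding an invariant" looks like, here is a standard-library sketch of a property check for idempotency. It uses `random` as a stand-in and is not Hypothesis's API; Hypothesis would add smarter input generation and shrinking of failing cases. The `apply_credit` operation and ledger shape are hypothetical.

```python
import random


def apply_credit(ledger: dict, request_id: str, amount: int) -> dict:
    """Hypothetical operation: each request_id changes the balance at most once."""
    if request_id in ledger["seen"]:
        return ledger
    return {
        "balance": ledger["balance"] + amount,
        "seen": ledger["seen"] | {request_id},
    }


def check_idempotent(trials: int = 500, seed: int = 0) -> bool:
    """Property: applying the same request twice equals applying it once."""
    rng = random.Random(seed)  # seeded so a CI failure is reproducible
    for _ in range(trials):
        ledger = {"balance": rng.randint(0, 10**6), "seen": frozenset()}
        request_id = f"req-{rng.randint(0, 50)}"
        amount = rng.randint(-1000, 1000)
        once = apply_credit(ledger, request_id, amount)
        twice = apply_credit(once, request_id, amount)
        if once != twice:
            return False
    return True
```

The point is that the reviewer approves the property ("retries never double-apply"), and the machine explores the inputs.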

4) Continuous fuzzing and adversarial validation are baseline controls

Agents and humans both overfit to “the tests we thought of.” You want systematic ways to find inputs you did not imagine.

Coverage-guided fuzzing exists for exactly this reason. LLVM’s libFuzzer is an in-process, coverage-guided fuzzing engine that mutates inputs to maximize coverage. (llvm.org)

For practical adoption, the model to copy is continuous fuzzing integrated into CI. Google’s OSS-Fuzz describes the approach as combining modern fuzzing with scalable execution, and Google’s ClusterFuzzLite announcement positions CI-integrated fuzzing as a way to catch issues before changes land. (Google GitHub)

Add a simple rule: if the risk score is high and the code touches parsers, protocol handling, deserialization, or security boundaries, fuzzing evidence is required.
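That rule is easy to automate. The sketch below assumes the risk tier is already computed and that fuzz-sensitive code can be recognized by path; the glob patterns are hypothetical and depend entirely on your repository layout.

```python
from fnmatch import fnmatch

# Hypothetical path patterns for parsers, protocol handling,
# deserialization, and security boundaries.
FUZZ_SENSITIVE_PATTERNS = (
    "*/parsers/*",
    "*/protocol/*",
    "*/deserialize/*",
    "*/auth/*",
)


def fuzzing_required(tier: str, changed_paths: list[str]) -> bool:
    """True when a high-risk change touches any fuzz-sensitive path."""
    if tier != "high":
        return False
    return any(
        fnmatch(path, pattern)
        for path in changed_paths
        for pattern in FUZZ_SENSITIVE_PATTERNS
    )
```

Path matching is a blunt instrument, so treat a miss as a reason to update the patterns rather than as proof that fuzzing is unnecessary.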

5) Provenance is part of correctness

At machine speed, the question “is this artifact what we think it is?” becomes central.

Do two things:

- Generate and attach build provenance. SLSA defines a framework of controls to prevent tampering and improve integrity across the build and supply chain.

- Use attestations that can be verified independently. The in-toto attestation framework defines a statement format for communicating what steps were performed in a supply chain, and SLSA provenance is one predicate type within that ecosystem.

Then sign the outputs. Cosign is a common tool in the Sigstore ecosystem for signing artifacts stored in registries, and its documentation demonstrates signing and associating provenance metadata.

Finally, generate an SBOM as a first-class artifact. CISA describes an SBOM as a nested inventory or “list of ingredients” for software components.

If you need standards, SPDX and CycloneDX are two dominant SBOM formats; CycloneDX’s specification overview describes representing software components and related metadata. (cyclonedx.org)

Practical implication: the “evidence bundle” is not just tests. It includes supply chain evidence.
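For a feel of what a provenance attestation carries, here is a sketch that builds an unsigned statement for one artifact. The top-level field names follow my reading of the in-toto Statement v1 and SLSA provenance v1 layouts, so verify them against the specifications before relying on this. In a real pipeline a trusted builder emits and signs this document; hand-assembling it in your own script would defeat the purpose.

```python
import hashlib


def provenance_statement(artifact_name: str, artifact_bytes: bytes,
                         builder_id: str, build_type: str) -> dict:
    """Build an unsigned, illustrative provenance statement for one artifact."""
    digest = hashlib.sha256(artifact_bytes).hexdigest()
    return {
        "_type": "https://in-toto.io/Statement/v1",
        # The subject binds the statement to exact artifact contents.
        "subject": [{"name": artifact_name, "digest": {"sha256": digest}}],
        "predicateType": "https://slsa.dev/provenance/v1",
        "predicate": {
            "buildDefinition": {
                "buildType": build_type,       # caller-supplied identifier
                "externalParameters": {},      # left empty in this sketch
            },
            "runDetails": {"builder": {"id": builder_id}},
        },
    }
```

A verifier recomputes the artifact's SHA-256 and compares it with the subject digest, which is what lets a deploy step answer "is this artifact what we think it is?" without trusting the registry.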

6) Production is a controlled experiment: progressive delivery and fast rollback

If you stop treating diff review as the safety net, you must upgrade how you ship.

- Canary releases: Google’s SRE guidance describes testing changes on a small portion of traffic to gain confidence before full rollout.

- Feature flags: Feature toggles allow trunk-based workflows by letting incomplete paths ship without being enabled.

- Observable systems: You need correlation across telemetry signals to decide “roll forward vs rollback” quickly. OpenTelemetry’s logs specification describes including resource context and enabling correlation with traces and metrics.

Operationally, EFD assumes: ship small, observe hard, revert fast. Without this, you are simply moving risk from pre-merge to post-merge without a safety harness.
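"Revert fast" works best when the decision is mechanical. The following sketch compares a canary's error rate with the baseline and returns one of three actions. The threshold values and the `Window` type are assumptions for illustration; real canary analysis usually looks at several metrics and uses statistical tests rather than a single difference.

```python
from dataclasses import dataclass


@dataclass
class Window:
    """Request and error counts observed over one analysis window."""
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_decision(baseline: Window, canary: Window,
                    max_rate_increase: float = 0.01,
                    min_requests: int = 500) -> str:
    """Return "wait", "rollback", or "promote" for the current window."""
    if canary.requests < min_requests:
        return "wait"  # not enough traffic to judge either way
    if canary.error_rate - baseline.error_rate > max_rate_increase:
        return "rollback"
    return "promote"
```

The thresholds belong in the evidence bundle, so the rollback criteria are agreed before the rollout starts rather than debated during an incident.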

Evidence-First Delivery: What the “evidence bundle” contains

A good evidence bundle is a single artifact attached to each merge (and ideally each deploy), including:

- Intent artifact

  - plan file link/hash

  - acceptance criteria

  - rollback plan

- Risk score and rationale

  - computed score and which signals drove it

- Verification results

  - unit and integration tests

  - contract tests (when interfaces change)

  - property-based tests for invariants

  - fuzzing summary (when applicable)

  - static analysis and dependency scanning outputs

- Supply chain artifacts

  - signed build provenance (SLSA-style provenance where feasible)

  - SBOM (SPDX or CycloneDX)

  - signature verification results

- Release safety artifacts

  - canary analysis thresholds

  - SLO impact guardrails

  - feature flag references and kill-switch location

Humans should be able to review this in 2 to 5 minutes for most changes.
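One way to keep the bundle reviewable in minutes is to make it a small, hashable document with a one-line summary. This sketch assembles a reduced bundle and derives a digest over its canonical JSON form; the field names and the summary format are hypothetical, and a real bundle would carry the remaining sections listed above.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_bundle(plan_bytes: bytes, risk: dict, checks: dict[str, str],
                 artifact_bytes: bytes) -> dict:
    """Assemble a reduced evidence bundle; checks maps name -> "pass" or "fail"."""
    bundle = {
        "intent": {"plan_sha256": sha256_hex(plan_bytes)},
        "risk": risk,
        "verification": checks,
        "supply_chain": {"artifact_sha256": sha256_hex(artifact_bytes)},
    }
    # Hash a canonical encoding so identical inputs always give the same digest.
    canonical = json.dumps(bundle, sort_keys=True, separators=(",", ":")).encode()
    bundle["bundle_sha256"] = sha256_hex(canonical)
    return bundle


def summarize(bundle: dict) -> str:
    """One-line summary a reviewer can scan before opening the details."""
    failed = sorted(n for n, result in bundle["verification"].items() if result != "pass")
    status = "green" if not failed else "red: " + ", ".join(failed)
    return f"risk={bundle['risk']['tier']} checks={status}"
```

The digest is the value you would sign and reference from the deploy record, so the bundle a human reviewed is provably the bundle that shipped.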

Evidence-First Delivery: How to enforce it with industry-standard tooling

Policy-as-code gate in CI/CD

Open Policy Agent (OPA) explicitly supports using policy-as-code guardrails in CI/CD pipelines. (Open Policy Agent)

Your policy can express rules like:

- deny merge if risk is high and no design review is linked

- deny merge if an external dependency is added without an SBOM update

- deny merge if provenance or signatures are missing

- require specific test classes for specific subsystems
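In OPA these rules would be written in Rego and evaluated against the bundle as input. To show only the shape of the logic, here is the same set of deny rules as a plain Python function. The dictionary keys are hypothetical and do not come from OPA or any other tool.

```python
def deny_reasons(change: dict) -> list[str]:
    """Return every reason to block the merge; an empty list means allow."""
    reasons = []
    if change.get("risk_tier") == "high" and not change.get("design_review_url"):
        reasons.append("high-risk change has no linked design review")
    if change.get("dependencies_added") and not change.get("sbom_updated"):
        reasons.append("dependency added without an SBOM update")
    if not (change.get("provenance_present") and change.get("signature_verified")):
        reasons.append("provenance or signature missing")
    # Required checks are assumed to be derived from the subsystems touched.
    missing = set(change.get("required_checks", [])) - set(change.get("passed_checks", []))
    if missing:
        reasons.append("missing required checks: " + ", ".join(sorted(missing)))
    return reasons
```

Returning all reasons at once, rather than failing on the first, saves authors from fixing one violation only to discover the next on the following run.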

Branch and merge rules

On GitHub, rulesets and branch protection can require status checks and, if you choose, approvals. Required status checks ensure CI tests pass before changes are allowed.

Opinionated guidance: keep “required approval” turned on only for the high-risk paths. Make your default gate be evidence.

Trunk-based development as the workflow foundation

DORA's research identifies trunk-based development as a required practice for continuous integration, alongside fast automated tests.

If you stay on long-lived branches and large PRs, you will fight your own system. EFD wants small, frequent, low-risk changes.

Evidence-First Delivery: A concrete pipeline blueprint

Here is a reference flow that works for most teams:

- Author creates intent artifact

  - plan.yaml with invariants, rollout, risks

- Automated risk scoring

  - CI computes score from repo metadata and diff

- Verification matrix runs based on risk

  - low risk: unit, lint, SCA, basic integration

  - medium: + contract tests, targeted perf check

  - high: + fuzzing, migration rehearsal, chaos test, security checks

- Evidence bundle is assembled

  - store it as a build artifact

  - include hashes of inputs and outputs

- Provenance + SBOM are generated and signed

  - provenance aligned to SLSA concepts.

  - SBOM per CISA definition, in SPDX or CycloneDX.

  - sign artifacts (cosign).

- Policy-as-code evaluates

  - OPA evaluates the bundle and denies or allows merge.

- Progressive delivery

  - feature flag default off for risky behavior.

  - canary to small traffic slice with automated rollback thresholds.

  - required telemetry correlation to support fast diagnosis.
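The verification matrix in this flow is cumulative: each tier runs everything from the tiers below it plus its own additions. A minimal sketch of that selection follows; the check identifiers are hypothetical job names standing in for whatever your CI system calls them.

```python
TIER_ORDER = ("low", "medium", "high")

# Checks each tier adds on top of the tiers before it (hypothetical job names).
EXTRA_CHECKS = {
    "low": ["unit", "lint", "sca", "basic-integration"],
    "medium": ["contract", "targeted-perf"],
    "high": ["fuzzing", "migration-rehearsal", "chaos", "security"],
}


def checks_for(tier: str) -> list[str]:
    """Return every check required at this tier, lowest tier first."""
    if tier not in TIER_ORDER:
        raise ValueError(f"unknown risk tier: {tier}")
    checks: list[str] = []
    for name in TIER_ORDER[: TIER_ORDER.index(tier) + 1]:
        checks.extend(EXTRA_CHECKS[name])
    return checks
```

Keeping the matrix as data makes it easy to review in a pull request and to feed into the policy gate as the list of required checks.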

What humans do in the EFD world

Human effort moves up the abstraction stack:

- Review the intent artifact for ambiguity and missing invariants.

- Review interface changes (schemas, APIs, permissions).

- Review the risk score if it looks wrong.

- Review evidence summaries, not raw diffs:

  - what tests ran

  - what changed in contracts

  - what fuzzing found

  - what dependencies changed

  - what the rollout plan is

Code reading still happens, but it is targeted:

- high-risk diffs

- novel algorithms

- security boundaries

- performance critical paths

This is not “no review.” It is review where humans add unique value.

How to know it is working: measure throughput and stability

Use delivery metrics to validate that you are not trading speed for quality.

DORA defines throughput and instability measures such as lead time for changes, deployment frequency, time to restore service, and change fail rate (and also discusses deployment rework rate).

If EFD is implemented correctly:

- lead time decreases because merges stop waiting on humans for routine changes

- deployment frequency increases because changes get smaller

- change fail rate should stay flat or improve because verification becomes deeper and more systematic

- time to restore improves because rollbacks and telemetry are first-class

If your change fail rate spikes, your “evidence” is not actually predictive. Usually the fix is: more invariants, better canary analysis, and better fuzzing coverage, not “go back to reading diffs.”
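These four measures can be computed from deployment records you probably already have. The sketch below assumes a hypothetical `Deployment` record with commit, deploy, and restore timestamps; it illustrates the arithmetic and is not DORA's official methodology.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    committed_at: datetime
    deployed_at: datetime
    failed: bool = False
    restored_at: Optional[datetime] = None  # set once service is restored


def delivery_metrics(deploys: list[Deployment], period_days: int) -> dict:
    """Summarize throughput and stability over one reporting period."""
    lead_times = [d.deployed_at - d.committed_at for d in deploys]
    restores = [d.restored_at - d.deployed_at
                for d in deploys if d.failed and d.restored_at]
    failures = sum(d.failed for d in deploys)
    return {
        "median_lead_time": median(lead_times) if lead_times else None,
        "deploys_per_day": len(deploys) / period_days,
        "change_fail_rate": failures / len(deploys) if deploys else 0.0,
        "median_time_to_restore": median(restores) if restores else None,
    }
```

Tracking these before and after adopting EFD gives you a baseline, so a regression in change fail rate shows up as data instead of as an argument.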

Practical next steps to implement Evidence-First Delivery

- Add an in-repo intent template (plan.yaml) for non-trivial changes.

- Start with a simple risk score (subsystem tags + diff size + dependency changes).

- Make CI produce an evidence bundle artifact.

- Require status checks via rulesets for the evidence bundle job.

- Introduce policy-as-code (OPA) for “deny if missing required evidence.”

- Add contract testing for external service boundaries (Pact). (Pact Docs)

- Add property-based tests for your most failure-prone pure functions (Hypothesis or equivalent). (Hypothesis)

- Add CI-integrated fuzzing for parsers and protocol edges (ClusterFuzzLite model). (Google Online Security Blog)

- Generate SBOMs and start signing build outputs (cosign) as your supply chain baseline. (CISA)

- Move risky changes behind feature flags and canary them with automated rollback thresholds. (martinfowler.com)

Evidence-First Delivery as a First Class Principle

Line-by-line PR review should stop being your primary safety mechanism. It does not scale, and it does not match how systems fail.

The scalable replacement is risk-gated, machine-verifiable evidence plus progressive delivery. When done well, humans spend their time where it matters: defining constraints, protecting interfaces, and judging tradeoffs. Everything else becomes a policy-enforced, attestable pipeline.

If you want, share your current stack (GitHub/GitLab, languages, deploy model, services vs monolith). I can adapt EFD into a concrete implementation plan with a sample evidence bundle schema and a starter OPA policy.
